What Is the Orchestration Tax? The Hidden Cost of Multi-Agent AI in Enterprise Sales

TL;DR

Multi-agent AI platforms create a hidden layer of reconciliation work (the orchestration tax) when their specialized agents disagree. For high-volume SMB sales, this overhead is manageable. For enterprise deals where relationships are irreplaceable and context is everything, it's a liability. This post breaks down where the tax shows up, why it compounds, and what questions to ask before you buy.

When your research agent identifies one priority, your content agent writes to a different angle, and your CRM shows conflicting data — who wins?

This isn't a hypothetical. It's what happens every day inside multi-agent AI sales platforms. And the answer to that question (who reconciles the conflict) reveals whether the AI you're buying is actually saving you work or just moving it somewhere less visible.

Key Terms

Orchestration tax: The hidden layer of reconciliation work created when multiple AI agents disagree. In a multi-agent system, agents optimized for different tasks regularly produce conflicting outputs. Someone — human or machine — has to resolve those conflicts before anything ships. That resolution layer is the orchestration tax. It wasn't in the demo. It's in your workflow now.

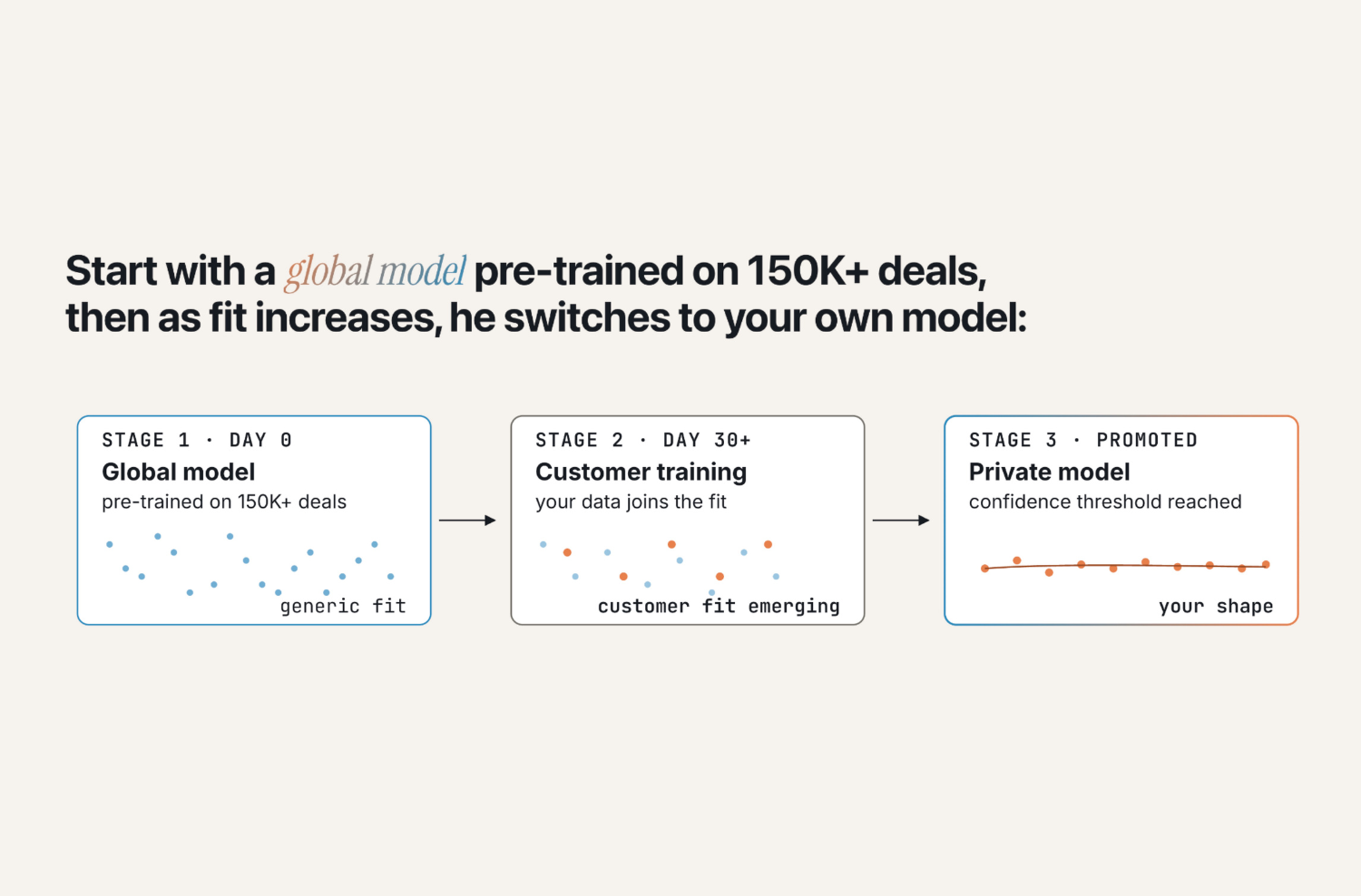

Multi-agent orchestration: An AI architecture where separate agents handle separate tasks and an additional layer coordinates their outputs. Each agent is optimized for its narrow function and works without any context shared with the others.

Single-agent architecture: One AI with full context across every meeting, email, CRM update, and document in a deal. No reconciliation layer because there's nothing to reconcile — the agent makes judgment calls from a complete picture.

White box AI: Systems where the reasoning is visible. You can see why the system recommended a specific play, not just what it recommended. Essential for enterprise deals where your rep needs to explain and defend the approach.

Black box AI: Autonomous systems that produce outputs without visible reasoning. Appropriate for high-volume, low-stakes sales where speed matters more than explainability.

Snapshot vs. video: The difference in how AI systems understand a deal. Multi-agent systems work from snapshots — static data optimized for each agent's narrow function. Single-agent systems work from a video — time-series data that shows how the deal has changed, who said what, and what's actually working.

The Orchestration Tax

Most AI sales platforms are built like committees. A research agent gathers intelligence. A content agent drafts messaging. An outreach agent handles sequencing. Each one optimized for its narrow function, operating in isolation.

Committees need project managers. When agents disagree — and they will — someone has to orchestrate the vote. Is that another AI layer making judgment calls? Or a human reviewing every output before it ships?

Either way, you've added a new layer of work. That's not automation. That's the orchestration tax.

If I'm paying for intelligence, why am I also paying for the reconciliation layer that makes that intelligence usable?
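To make the tax concrete, here is a minimal sketch of the check an orchestration layer has to run before anything ships. It is illustrative only: the agent names, the `AgentOutput` type, and the `find_conflicts` helper are assumptions made up for this example, not any vendor's actual API.

```python
from dataclasses import dataclass


@dataclass
class AgentOutput:
    agent: str  # hypothetical agent name, e.g. "research"
    topic: str  # what the agent is making a claim about
    value: str  # the agent's answer


def find_conflicts(outputs):
    """Group outputs by topic and return the topics where agents disagree."""
    by_topic = {}
    for out in outputs:
        by_topic.setdefault(out.topic, {})[out.agent] = out.value
    return {
        topic: answers
        for topic, answers in by_topic.items()
        if len(set(answers.values())) > 1
    }


# Made-up outputs for one deal.
outputs = [
    AgentOutput("research", "vp_priority", "innovation velocity"),
    AgentOutput("content", "vp_priority", "cost savings"),
    AgentOutput("research", "deal_stage", "evaluation"),
    AgentOutput("crm", "deal_stage", "evaluation"),
]

conflicts = find_conflicts(outputs)
for topic, answers in conflicts.items():
    print(f"needs reconciliation: {topic} -> {answers}")
```

Detecting the disagreement is the cheap part. Every topic this returns still needs a decision from a person, a rule, or another model, and that decision is where the tax gets paid.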

Why Multi-Agent Systems Break Down in Enterprise Sales

The appeal of multi-agent orchestration is intuitive. Break the problem into components. Specialize each agent. Assemble the output. It's how humans work in large organizations. Why wouldn't it work for AI?

Because AI doesn't have the implicit context humans carry between conversations.

When your Head of Sales talks to your Head of Enablement, they both understand the competitive landscape, the deal history, the strategic priorities. They don't need an orchestration layer to reconcile their perspectives — they're operating from shared context.

Multi-agent systems don't have shared context. Each agent operates on a snapshot of data optimized for its narrow function. The orchestration layer is trying to recreate the shared context humans have natively — after the fact, every time, with incomplete information.

The alternative is to build context into the architecture from the start. One agent with full access to every meeting, email, CRM update, and document across every deal. Not a snapshot — a video. Time-series data that shows how deals change over time, stacked against every other deal in the pipeline.
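The snapshot versus video distinction can be sketched in a few lines. This is a toy model under stated assumptions: the `DealEvent` type, the stance labels, and the dates are hypothetical, and real deal data would be far messier.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DealEvent:
    when: date
    stakeholder: str
    topic: str
    stance: str  # hypothetical labels: "positive", "neutral", "negative"


def snapshot(events, stakeholder):
    """Latest known stance only: what a snapshot-style view keeps."""
    relevant = [e for e in events if e.stakeholder == stakeholder]
    return max(relevant, key=lambda e: e.when).stance


def trajectory(events, stakeholder):
    """Ordered history of stances: the 'video' view of the same data."""
    relevant = sorted(
        (e for e in events if e.stakeholder == stakeholder),
        key=lambda e: e.when,
    )
    return [(e.when.isoformat(), e.topic, e.stance) for e in relevant]


# Made-up history for one stakeholder.
events = [
    DealEvent(date(2024, 1, 10), "CFO", "pricing", "negative"),
    DealEvent(date(2024, 2, 14), "CFO", "ROI model", "neutral"),
    DealEvent(date(2024, 3, 20), "CFO", "ROI model", "positive"),
]

print(snapshot(events, "CFO"))
print(trajectory(events, "CFO"))
```

The snapshot says the CFO is positive. The trajectory shows the CFO started out negative on pricing and came around on the ROI model, which is the part a rep would actually want to know before the next conversation.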

Most teams building multi-agent systems are solving the wrong problem. They're optimizing for task decomposition when they should be optimizing for judgment under uncertainty.

Three Scenarios Where the Orchestration Tax Shows Up

Scenario 1: When Agents Disagree on a $500K Deal

You're working a $500K enterprise deal. The AI system drafts an executive brief that misreads a stakeholder dynamic — framing cost savings to a VP who cares about innovation velocity, or pitching operational efficiency to someone measured on strategic transformation.

In a multi-agent system, which agent got it wrong? The research agent that profiled the stakeholder? The content agent that chose the framing? The orchestration layer that weighted one input over another?

More importantly: can your rep explain to the buyer why the system made that choice? Can they course-correct with confidence, or are they selling a black box they don't understand themselves?

Enterprise buyers are sophisticated. When they ask 'how does this work?' and your rep can't explain the reasoning, trust evaporates. The orchestration tax here isn't measured in hours — it's measured in deals.

Scenario 2: When Generic Content Reaches a Sophisticated Buyer

Your champion forwards your business case to their CFO. The CFO has seen dozens of AI-generated documents this quarter. They can spot the patterns: generic value propositions, templated ROI calculations, messaging that sounds like it came from a vendor, not a trusted advisor.

A single-agent system with full deal context knows this CFO capped projections at 8% in the last three business cases they approved. A multi-agent content generator doesn't — it's optimized for the generic CFO persona, not this specific stakeholder in this specific deal.

The orchestration tax here is invisible until the deal stalls. Your content looked right. It just wasn't right for this person, in this deal, at this moment. And no individual agent in the system knew enough to catch it.

Scenario 3: The Maintenance Burden Nobody Budgeted For

Your business changes. A new competitor enters the market. Your messaging shifts. A new sales methodology gets rolled out.

How many agents need to be retrained? How many prompts need to be rewritten? Who on your team owns that ongoing recalibration?

In a multi-agent system, every agent needs independent updating. The research agent needs new competitive intelligence. The content agent needs new messaging frameworks. The outreach agent needs new sequencing logic. That's three separate maintenance streams — and three separate failure points.

In a single-agent system trained on outcomes, the system learns from what's working. When deals close or stall, the patterns update automatically. No prompt engineering required.

Are you buying intelligence that gets smarter over time, or infrastructure that requires a dedicated team to maintain?

The Risk Profile Question

Not all AI architectures carry the same risk. The right choice depends entirely on what failure costs you.

For high-volume SMB sales — short cycles, low ACVs, high account counts — black box autonomous systems optimize for speed and volume. Get it wrong on one account? Move to the next. The orchestration tax is manageable because the cost of any individual failure is low.

For enterprise accounts — 200 strategic accounts, 11 to 22 stakeholders per deal, relationships that took years to build — the calculus inverts completely. You can't afford to burn a relationship because an agent did something you didn't expect and couldn't control. You need to see the reasoning behind every judgment call.

The problem is that most platforms are built for the former and marketed to the latter.

Transparency as a Business Requirement

Enterprise sales is built on trust. Your rep earns trust with the buyer. Your buyer earns trust with their internal stakeholders. That trust compounds — or it breaks.

Black box autonomy breaks this chain. Your rep becomes a messenger for recommendations they don't understand. When the buyer asks 'why this approach?' the rep has no answer. Trust fractures.

White box transparency preserves the chain. The system shows its reasoning: 'I'm recommending this because seven of ten similar deals stalled at this stage when we didn't engage the CFO early. Here's what worked in the deals that closed.' The rep understands the reasoning. The buyer sees the judgment. Trust compounds.
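One way to picture white box output is a recommendation that carries its own evidence. The sketch below is a simplified illustration, not how any particular product works: `PastDeal`, `Recommendation`, and `recommend_cfo_engagement` are hypothetical names, and the ten deals are invented to mirror the example above.

```python
from dataclasses import dataclass


@dataclass
class PastDeal:
    name: str
    engaged_cfo_early: bool
    outcome: str  # "closed" or "stalled"


@dataclass
class Recommendation:
    play: str
    reasoning: str
    evidence: list  # names of the deals behind the reasoning


def recommend_cfo_engagement(similar_deals):
    """Return a play together with the counts and deals that justify it."""
    no_early_cfo = [d for d in similar_deals if not d.engaged_cfo_early]
    stalled = [d for d in no_early_cfo if d.outcome == "stalled"]
    reasoning = (
        f"{len(stalled)} of {len(no_early_cfo)} similar deals stalled "
        "when the CFO was not engaged early."
    )
    return Recommendation("Engage the CFO early", reasoning, [d.name for d in stalled])


# Invented data: ten similar deals without early CFO engagement, seven stalled.
similar_deals = [
    PastDeal(f"deal-{i}", False, "stalled" if i < 7 else "closed")
    for i in range(10)
]

rec = recommend_cfo_engagement(similar_deals)
print(rec.play)
print(rec.reasoning)
print(rec.evidence)
```

The design choice that matters is that the reasoning and the evidence travel with the play, so the rep can open the underlying deals and check the claim instead of taking it on faith.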

The question isn't whether AI can do the work. It's whether AI can do the work in a way that makes your team smarter, your buyers more confident, and your judgment more defensible.

The Real Product Isn't the Content

Here's what sophisticated buyers already understand: they can estimate the token count. They can see the API calls. They know what it costs to generate a document.

When the production process is transparent and reproducible, pricing power evaporates.

What buyers can't reproduce is judgment. The accumulated context that drives nuanced decisions based on high-stakes outcomes. The pattern recognition that says 'this deal looks like these seven others, and here's what worked.'

You don't pay a lawyer $1,000 an hour for the documents. You pay for the judgment behind them — the foresight of what's likely to happen, the sequencing of decisions, the recognition of patterns across hundreds of similar situations.

The question for enterprise sales leaders evaluating AI isn't 'can it write a business case?' It's 'can it make the judgment call about which business case to write, for which stakeholder, at which stage in the deal?'

How to Evaluate AI Sales Platforms: The Questions to Ask

On orchestration: When your agents produce conflicting recommendations, what resolves the conflict? Human review, another AI layer, or a defined rule? What does that resolution cost in time?

On transparency: Can your rep see why the system recommended a specific play — not just what it recommended? Can they explain that reasoning to a buyer?

On context: Does the system work from a snapshot of each deal or a full time-series history? Does it know how this specific stakeholder responded three months ago?

On maintenance: When your messaging changes, how many agents need to be updated? Who owns that? What's the engineering cost?

On learning: When deals close or stall, does the system learn from those outcomes automatically? Or does improving the output require your team to rewrite prompts?

If the AI does something unexpected in your most strategic deal, can you afford the time it takes to figure out why? If the answer is no, you need transparency built into the architecture — not bolted on afterward.

Ready to See How Olli Handles It Differently?

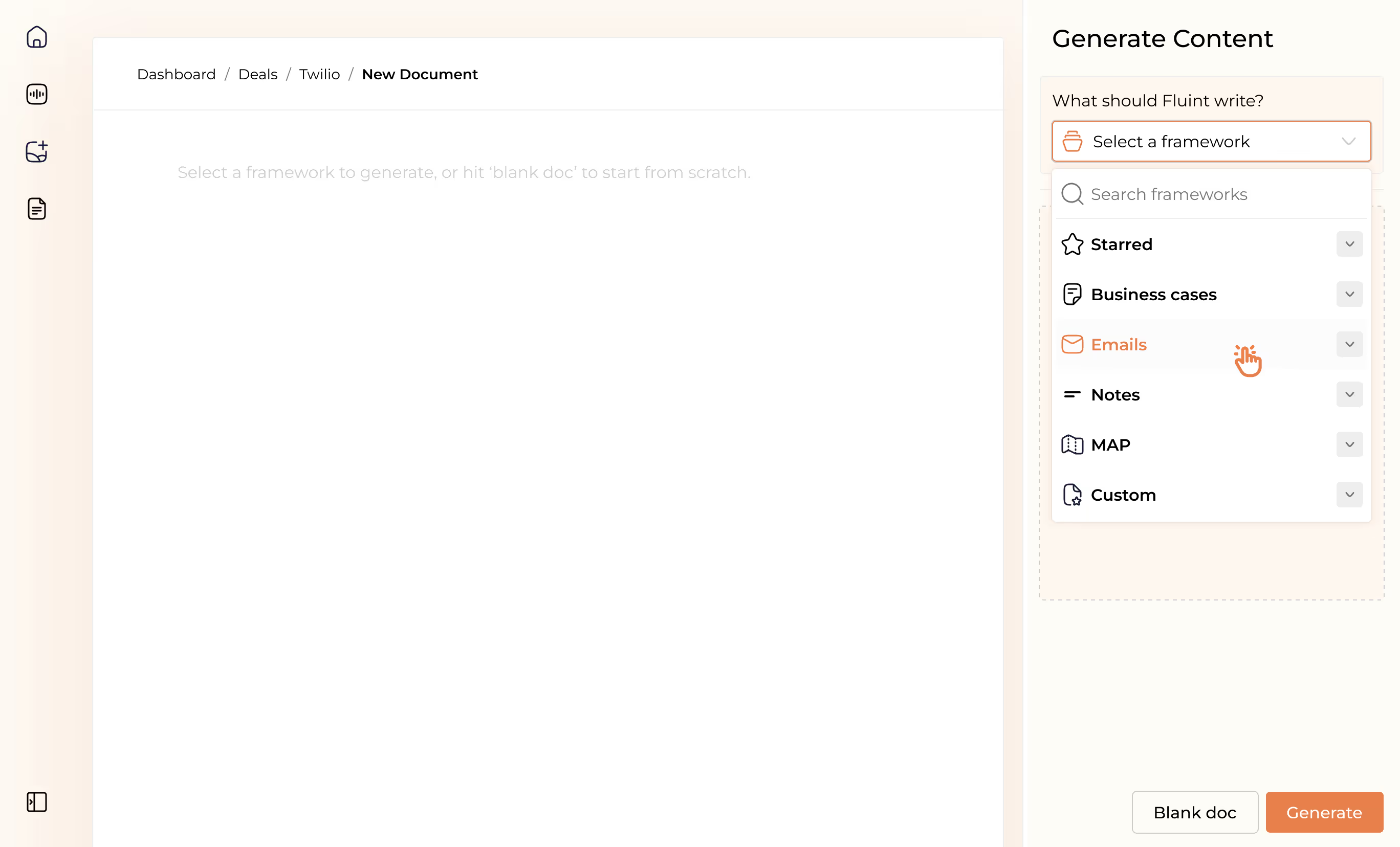

Olli is Fluint's AI sales agent built for enterprise reps working complex deals with multiple stakeholders. Unlike multi-agent platforms, Olli operates as a single agent with full deal context — synthesizing every meeting, email, and document to make judgment calls your rep can see, explain, and trust.

No orchestration layer. No reconciliation tax. Just context, transparency, and intelligence that gets smarter as your deals close.

FAQs

The orchestration tax is the hidden reconciliation work created when multiple AI agents disagree. In a multi-agent system, separate agents handle research, content, and sequencing — each optimized for its narrow function, each working from a different snapshot of deal data. When they produce conflicting outputs, someone has to resolve the conflict before anything ships. That resolution layer is work that wasn't in the original automation pitch. It's overhead that compounds as your team scales.

Multi-agent systems don't share context. Each agent operates on a snapshot of data optimized for its own function — the research agent sees one version of the deal, the content agent sees another. Without a shared, continuous view of the deal, agents regularly surface different priorities, different stakeholder reads, and different recommended plays. A single-agent system avoids this by maintaining full context across every interaction in the deal from the start.

For enterprise accounts, the most important criteria are transparency, context depth, and maintenance burden. Transparency means your rep can see why the system recommended a specific play. Context depth means the system synthesizes the full history of a deal, not just the most recent activity. Maintenance burden means understanding how many agents need to be updated when your messaging changes, and who owns that work. The tell: if the AI does something unexpected in your most strategic deal, how long does it take to figure out why?

Multi-agent systems break the sales workflow into components and assign a specialized agent to each. The tradeoff is context: each agent works from a snapshot, and an orchestration layer tries to reconcile their outputs. Single-agent systems maintain full context across every meeting, email, CRM update, and document in a deal, making judgment calls from a complete picture without a reconciliation layer. For enterprise deals where relationships and context are irreplaceable, single-agent architecture produces more defensible, deal-specific outputs.

Yes — for the right motion. High-velocity SMB sales with standardized processes, short cycles, and low cost-per-deal are well-suited to multi-agent systems. The failure cost is low, the volume is high, and the orchestration overhead is manageable relative to the throughput. The problem is that most multi-agent platforms are built for this motion and marketed to enterprise teams who need something different. Before evaluating any platform, be clear on what failure costs you — that question determines which architecture fits.
